TL;DR: Most ICPs are built using surface-level filters like industry, company size, or revenue. That helps identify companies that look similar, but not necessarily companies facing the problems your product solves best. Two accounts can look nearly identical on paper and still be very different customers. The strongest GTM teams go beyond firmographics and think more deeply about operational context, pain points, goals, and where their product creates disproportionate value.

Q. Why is pipeline an incomplete GTM metric?

A. Because pipeline is an outcome, and outcomes are influenced by factors outside a GTM team’s control. What the team does control is decision quality: account selection, buying group coverage, timing, and context. These shape the probability of pipeline generation.

Q. What is decision quality in GTM?

A. Decision quality refers to the quality of upstream (pre-pipeline) GTM decisions made before execution begins, including who to target, when to engage, which stakeholders matter, and what context informs outreach.

Q. What is pre-pipeline?

A. Pre-pipeline refers to the upstream decisions that shape pipeline before outreach begins.

In 1964, a Stanford professor named Ronald Howard coined a term that would change how serious organizations think about choices. He called it decision analysis, and its foundational principle is simple: You cannot judge the quality of a decision by the quality of the outcome that follows it.

You can have good decisions with bad outcomes, and bad decisions with good outcomes. It's a logical mistake to say "I got a good outcome, therefore I made a good decision."

Howard spent the next six decades at Stanford building a rigorous science around this insight. He deliberately avoided naming his field "decision engineering" because that phrase suggested trying to force decisions to come out a certain way. Outcomes, he understood, involve factors outside any decision-maker's control. What you can control, and therefore what you should be judged on, is the quality of the process that produced the decision.

It is a principle most organizations acknowledge in theory. Let’s see how to apply it in practice.

The GTM version of this problem

In B2B go-to-market, teams are almost universally measured on outcomes: pipeline generated, meetings booked, and deals closed. These are the numbers that matter on the dashboard and in the board deck. The proof is in the pudding, right?

The problem arises when outcomes become the only measure, and the pressure to show results pushes us to skip the decisions that determine whether those results are achievable in the first place.

- Which accounts should we be working right now, and why?

- Which stakeholders matter in this deal, and have we reached all of them?

- Is this the right moment to engage, or are we reaching out because the sequence says it’s time?

- What context has changed at this account, and does our message reflect it?

These are usually treated as execution questions, but in reality they are upstream decisions. And in most GTM motions, they happen by default, because there is no system for making them deliberately.

The result is what Howard would have recognized immediately: outcomes that look random, because the decisions behind them were random. Pipeline that feels unpredictable, because the inputs that shape pipeline were never treated as inputs worth improving.

Luck and skill are not the same thing

Howard's framework holds that a decision's quality comes from the information gathered and used at the time of the decision, and that outcomes should be accepted as the result of a probability distribution.

Howard was not saying outcomes don't matter. He was saying that conflating outcome quality with decision quality is a reasoning error that leads organizations to draw the wrong conclusions from both successes (wins) and failures (losses). You don’t fully control buyer politics, competitive dynamics, or predatory pricing. What you can control is the quality of the decisions that precede execution. And better upstream decisions, made consistently, are the most reliable path to outcomes that don't feel like luck.
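To make the "probability distribution" point concrete, here is a minimal sketch, not a model of any real pipeline. It assumes two hypothetical processes, a well-informed one that wins 20% of deals and a poorly informed one that wins 10%, and it assumes deals are independent. Both the win rates and the function names are illustrative assumptions. It computes how often the weaker process closes at least as many deals as the stronger one.

```python
from math import comb


def binom_pmf(k: int, n: int, p: float) -> float:
    """Probability of exactly k wins in n independent deals, each won with probability p."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)


def prob_poor_process_looks_as_good(n_deals: int, p_good: float, p_poor: float) -> float:
    """Chance that the poor process closes at least as many of n_deals as the good one."""
    total = 0.0
    for good_wins in range(n_deals + 1):
        # Probability the poor process wins good_wins deals or more.
        p_poor_matches = sum(
            binom_pmf(k, n_deals, p_poor) for k in range(good_wins, n_deals + 1)
        )
        total += binom_pmf(good_wins, n_deals, p_good) * p_poor_matches
    return total


# Hypothetical win rates, chosen only for illustration.
P_GOOD, P_POOR = 0.20, 0.10

for n in (1, 10, 100):
    print(n, round(prob_poor_process_looks_as_good(n, P_GOOD, P_POOR), 3))
```

Under these assumptions, judged on a single deal, the weaker process looks at least as good as the stronger one 82% of the time (0.8 × 1 + 0.2 × 0.1). The figure shrinks as deals accumulate, which is why one win or one loss says little about the decisions behind it.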

A deal closes. Was it because the account was well-chosen, the buying group fully mapped, the timing informed by a real signal? Or was it because the champion happened to be in the market regardless, and the sequence landed on a lucky day? If you don't know, you can't improve.

A deal stalls. Was it because the product wasn't right for the account? Or because the economic buyer was never engaged, the message never adapted to their role, and the outreach was single-threaded into someone who couldn't champion the deal internally? If you don't know, you'll optimize the wrong thing.

The GTM function has a systematic version of this problem. When pipeline misses, the instinct is to add more activity: more sequences, more contacts, more channels. When pipeline hits, the instinct is to repeat the motion. Both responses skip examining what actually drove the outcome, and both are, in Howard's terms, a failure to think clearly about decisions.

What is decision quality in GTM?

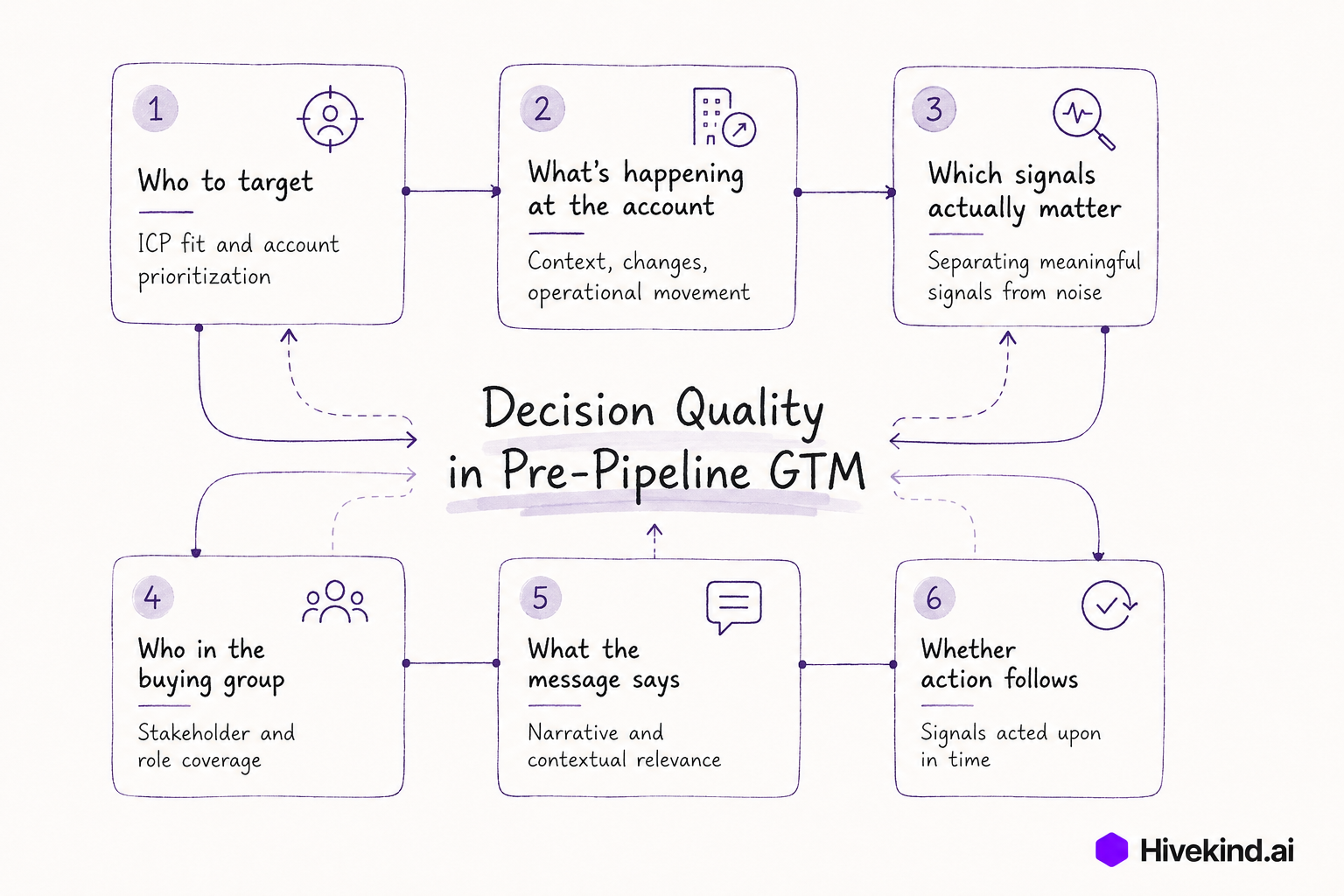

Howard's framework for decision quality has six requirements: an appropriate frame, creative alternatives, relevant and reliable information, clear values and tradeoffs, sound reasoning, and commitment to action.

These six requirements map cleanly to the upstream decisions most GTM teams skip, or make by default:

Who to target: ICP prioritization isn't a one-time exercise. Markets shift, signals change, and the accounts worth working this week aren't the same as last quarter's list. A clear frame means continuously asking: are we working the right problem right now?

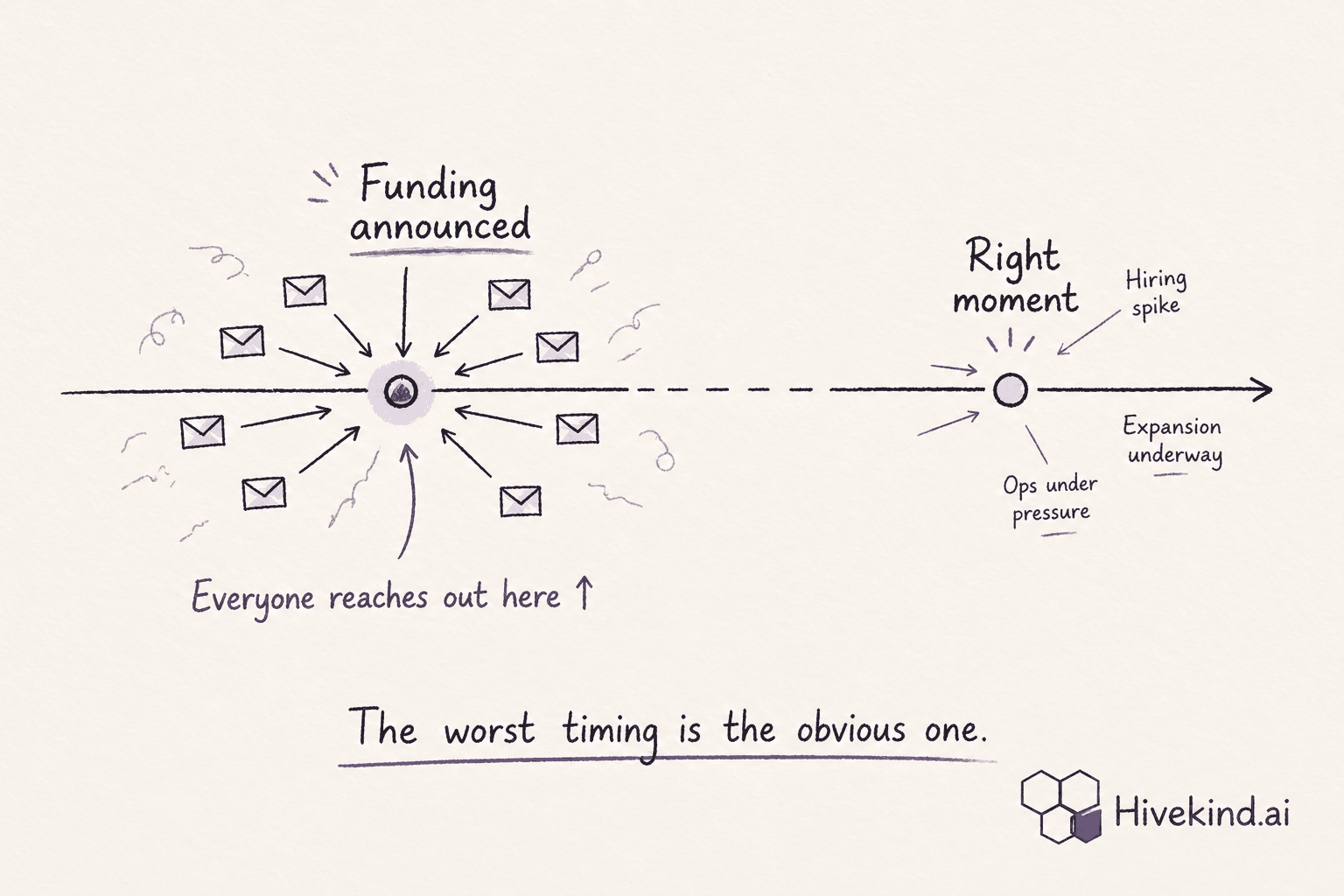

What's happening at the account: relevant and reliable information means knowing what's actually changed at a target account. A leadership hire, a funding round, or a product launch alters the relevance of outreach in ways a static account list can't reflect.

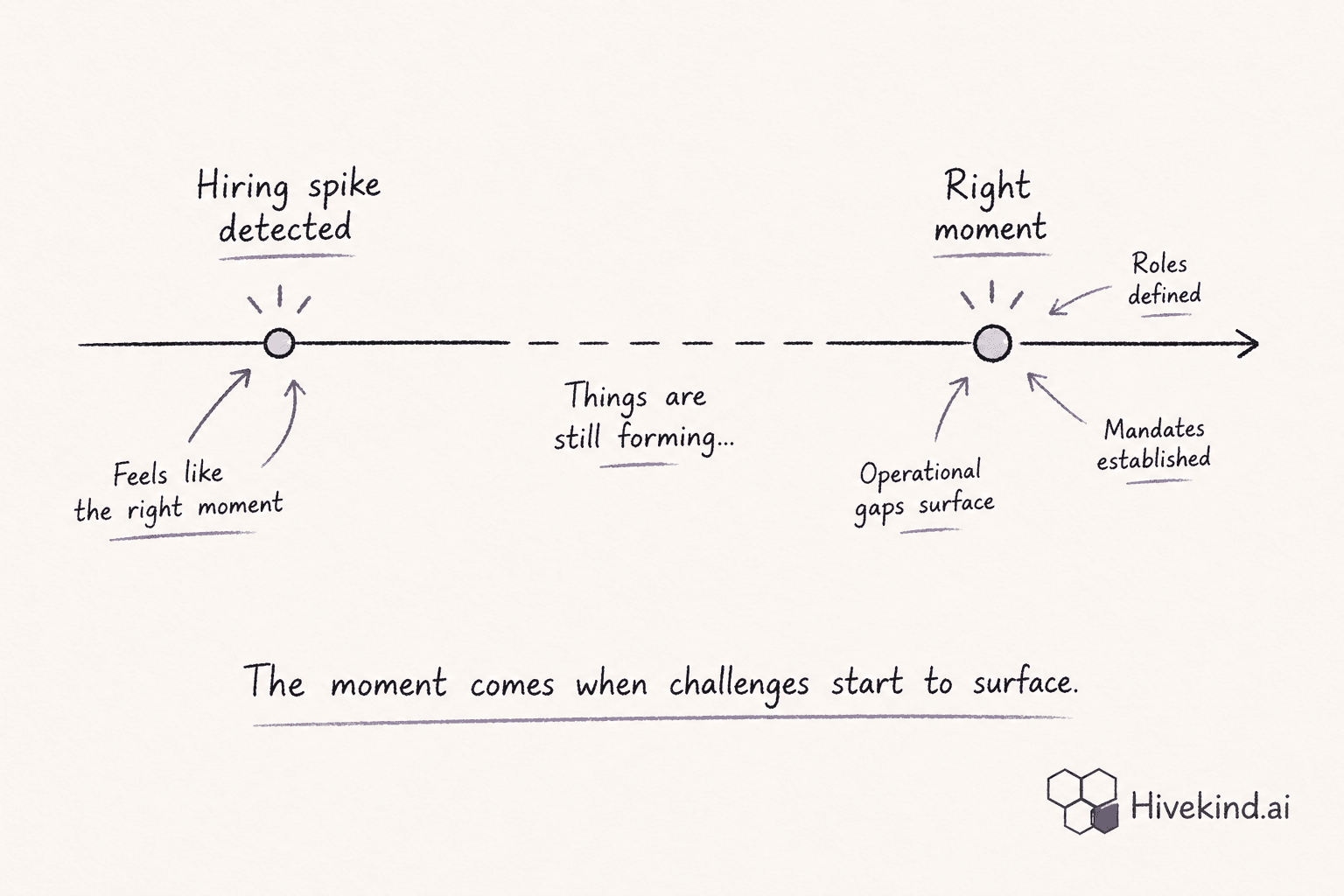

Which signals actually matter: not all signals are equal for every ICP. A hiring spike is significant for one company's GTM motion and noise for another's. Filtering signals to what's relevant for your specific context is itself a decision, and one most teams don't make deliberately.

Who in the buying group: clear values and tradeoffs means knowing which stakeholders deserve attention and in what order. Most teams reach the champion and hope for the best. The economic buyer, the technical evaluator, and procurement have different motivations and different entry points.

What the message says: sound reasoning means the message reflects what you actually know about this account and this role. Not a template with a first name. Not generic pain points. Something that demonstrates you understood the context before you reached out.

Whether action follows: commitment to action is the most important and most broken requirement in GTM. When a signal fires, does outreach change? Or does it stay in a dashboard until the window closes?

These may seem aspirational, but they're the upstream decisions that determine whether downstream execution produces results consistently.

Decision quality in GTM includes:

- account prioritization

- buyer group mapping

- signal interpretation

- timing decisions

- contextual messaging
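As one way to picture signal interpretation and account prioritization working together, the sketch below ranks accounts by recent signals. Everything in it is an assumption made for illustration: the signal names, the per-ICP weights, the 90-day window, and the linear freshness decay. None of it describes how any particular tool works.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical weights for one ICP. A weight of 0.0 marks a signal as noise
# for this team, even if it would matter to another.
SIGNAL_WEIGHTS = {"leadership_hire": 3.0, "funding_round": 2.0, "hiring_spike": 0.0}
WINDOW_DAYS = 90  # assumed window after which a signal is considered stale


@dataclass
class Signal:
    account: str
    kind: str
    observed: date


def rank_accounts(signals: list[Signal], today: date) -> list[tuple[str, float]]:
    """Rank accounts by the summed weight of relevant, still-fresh signals."""
    scores: dict[str, float] = {}
    for s in signals:
        age = (today - s.observed).days
        weight = SIGNAL_WEIGHTS.get(s.kind, 0.0)
        if weight == 0.0 or age < 0 or age > WINDOW_DAYS:
            continue  # irrelevant for this ICP, dated in the future, or stale
        freshness = 1 - age / WINDOW_DAYS  # linear decay from 1.0 toward 0.0
        scores[s.account] = scores.get(s.account, 0.0) + weight * freshness
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


today = date(2025, 6, 30)
signals = [
    Signal("Acme", "leadership_hire", date(2025, 6, 21)),      # 9 days old
    Signal("Globex", "funding_round", date(2025, 5, 16)),      # 45 days old
    Signal("Initech", "hiring_spike", date(2025, 6, 30)),      # noise for this ICP
    Signal("Umbrella", "leadership_hire", date(2025, 3, 2)),   # 120 days old, stale
]
ranked = rank_accounts(signals, today)
print([name for name, _ in ranked])
```

The design choice worth noting is that the weights and the window are explicit inputs. Changing them is a deliberate decision that can be reviewed later, rather than a default nobody chose.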

A different way to think about what GTM tools should do

Most GTM tools are measured on outcomes, and these are not wrong measures. But they are incomplete, for exactly the reason Howard identified: outcomes involve factors outside the tool's control, such as the weight of the brand, the relevance of the product to the market at this moment, the skill of the sales team in converting opportunities, the competitive environment, and the buyer's internal politics and decision-making.

A tool that promises outcomes is making a claim it cannot fully keep, and in doing so, it obscures the more honest and more useful value: that it improves the quality of the decisions that precede execution.

Better upstream decisions improve the probability of better outcomes. That is a more rigorous claim, and one that decision science has been making, and proving, since 1964.

We built Hivekind around this idea. It’s a practical position rather than a philosophical one: the pre-pipeline decisions that determine which accounts get worked, when, covering whom, and with what context are improvable. And improving them systematically is the most reliable path to pipeline that doesn't feel like luck.